MCP vs API vs A2A: Which Protocol Actually Matters?

Three Protocols, Three Problems

If you're building anything with AI agents right now, you've probably heard people throwing around MCP, API, and A2A like they're interchangeable. They're not. Each one solves a fundamentally different problem.

- API is how software talks to software

- MCP is how AI models talk to tools and data

- A2A is how AI agents talk to other AI agents

Let's break each one down without the hype.

APIs: The Foundation

You already know what an API (Application Programming Interface) is. It's the contract between two pieces of software. REST, GraphQL, and gRPC are all flavors of the same idea: define endpoints, send requests, get responses.

APIs are rigid by design. You need to know the endpoint, the schema, the auth method, and the exact shape of the request. That rigidity is a feature. It makes them predictable, testable, and debuggable.
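To make that rigidity concrete, here is a minimal sketch of everything a caller has to pin down before a single request goes out. The host `api.example.com`, the `/v1/orders` path, the payload fields, and the token are all hypothetical, and the request is only built, never sent.

```python
import json
import urllib.request

# Hypothetical endpoint and credentials, for illustration only.
BASE_URL = "https://api.example.com"
API_TOKEN = "replace-me"


def build_create_order_request(customer_id: int, sku: str, quantity: int) -> urllib.request.Request:
    """Build (but do not send) a POST request with a fixed, known shape."""
    if quantity < 1:
        raise ValueError("quantity must be at least 1")
    # The caller must already know the exact field names the server expects.
    body = json.dumps({"customer_id": customer_id, "sku": sku, "quantity": quantity})
    return urllib.request.Request(
        url=f"{BASE_URL}/v1/orders",          # the endpoint
        data=body.encode("utf-8"),            # the schema
        method="POST",
        headers={
            "Authorization": f"Bearer {API_TOKEN}",  # the auth method
            "Content-Type": "application/json",
        },
    )


request = build_create_order_request(customer_id=42, sku="ABC-1", quantity=2)
print(request.get_method(), request.full_url)
```

Every value here is fixed ahead of time by the contract, which is exactly what makes the call easy to test and debug.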

When to use APIs:

- Service-to-service communication

- Well-defined, stable integrations

- When you control both sides of the contract

- Public data access (REST/GraphQL)

The limitation: APIs require the caller to know exactly what to call and how. An LLM doesn't naturally know your API schema. You have to hand it function definitions, describe parameters, and hope it formats the call correctly.
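A rough sketch of what "handing it function definitions" can look like, plus the checking you end up writing because the model's output is only probably well-formed. The `get_weather` function, its parameters, and the layout of the definition are assumptions for illustration; each vendor's function-calling format differs in the details.

```python
import json

# A generic function definition of the kind you hand to a model.
# The field layout is an assumption, not any specific vendor's format.
GET_WEATHER = {
    "name": "get_weather",
    "description": "Return the current weather for a city.",
    "parameters": {
        "type": "object",
        "properties": {
            "city": {"type": "string"},
            "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
        },
        "required": ["city"],
    },
}

PYTHON_TYPES = {"string": str, "number": (int, float), "boolean": bool}


def validate_call(raw_call: str, definition: dict) -> dict:
    """Parse a model-produced call and check it against the definition."""
    call = json.loads(raw_call)
    if call.get("name") != definition["name"]:
        raise ValueError(f"unknown function: {call.get('name')!r}")
    schema = definition["parameters"]
    args = call.get("arguments", {})
    for key in schema["required"]:
        if key not in args:
            raise ValueError(f"missing required argument: {key}")
    for key, value in args.items():
        spec = schema["properties"].get(key)
        if spec is None:
            raise ValueError(f"unexpected argument: {key}")
        if not isinstance(value, PYTHON_TYPES[spec["type"]]):
            raise ValueError(f"argument {key} should be a {spec['type']}")
        if "enum" in spec and value not in spec["enum"]:
            raise ValueError(f"argument {key} must be one of {spec['enum']}")
    return args


# A call as the model might emit it (hand-written here, not real model output).
model_output = '{"name": "get_weather", "arguments": {"city": "Oslo", "unit": "celsius"}}'
args = validate_call(model_output, GET_WEATHER)
print(args)
```

A call that names the argument `location` instead of `city` would be rejected here, which is the "hope it formats the call correctly" problem in miniature.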

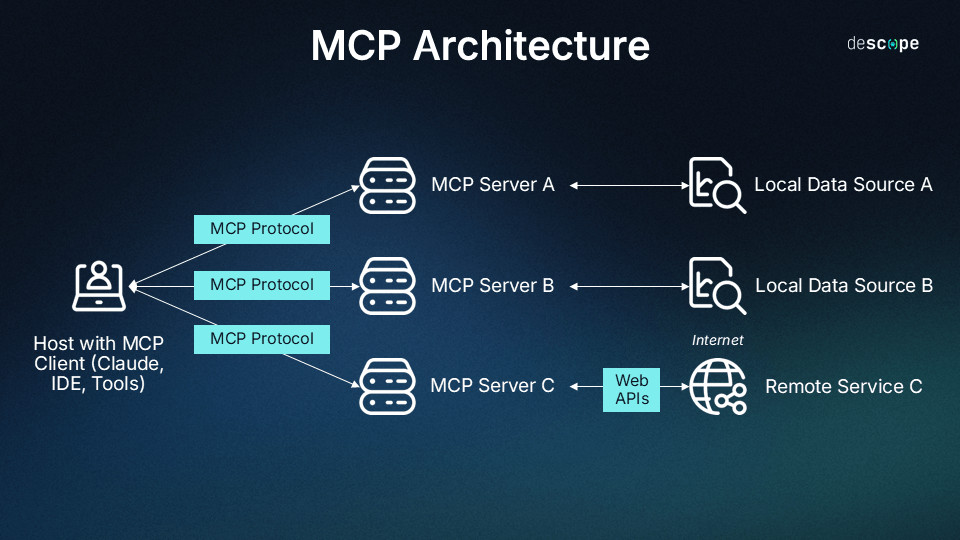

MCP: Giving AI Models Hands

Model Context Protocol (MCP) is an open standard created by Anthropic that solves one specific problem: how do you give an AI model structured access to external tools and data?

Think of MCP as a universal adapter between LLMs and the outside world. Instead of writing custom function-calling logic for every tool, MCP provides a standardized way for models to discover and use tools.

How MCP works:

- An MCP server exposes tools, resources, and prompts

- An MCP client (your AI app) connects to the server

- The AI model discovers what tools are available

- The model decides when and how to use them

The key insight: MCP is about tool use, not agent communication. It standardizes how a single AI model interacts with external capabilities like reading files, querying databases, calling APIs, and executing code.

When to use MCP:

- Building AI assistants that need access to external tools

- Creating reusable tool integrations (build once, use across any MCP-compatible client)

- When you want your LLM to dynamically discover and use capabilities

- IDE integrations, knowledge base access, workflow automation

Real-world example: Claude Code can connect to MCP servers to reach systems beyond its built-in file, search, and shell tools, such as a database or an issue tracker. Each capability a server exposes is an MCP tool that Claude can invoke when needed.

A2A: Agents Talking to Agents

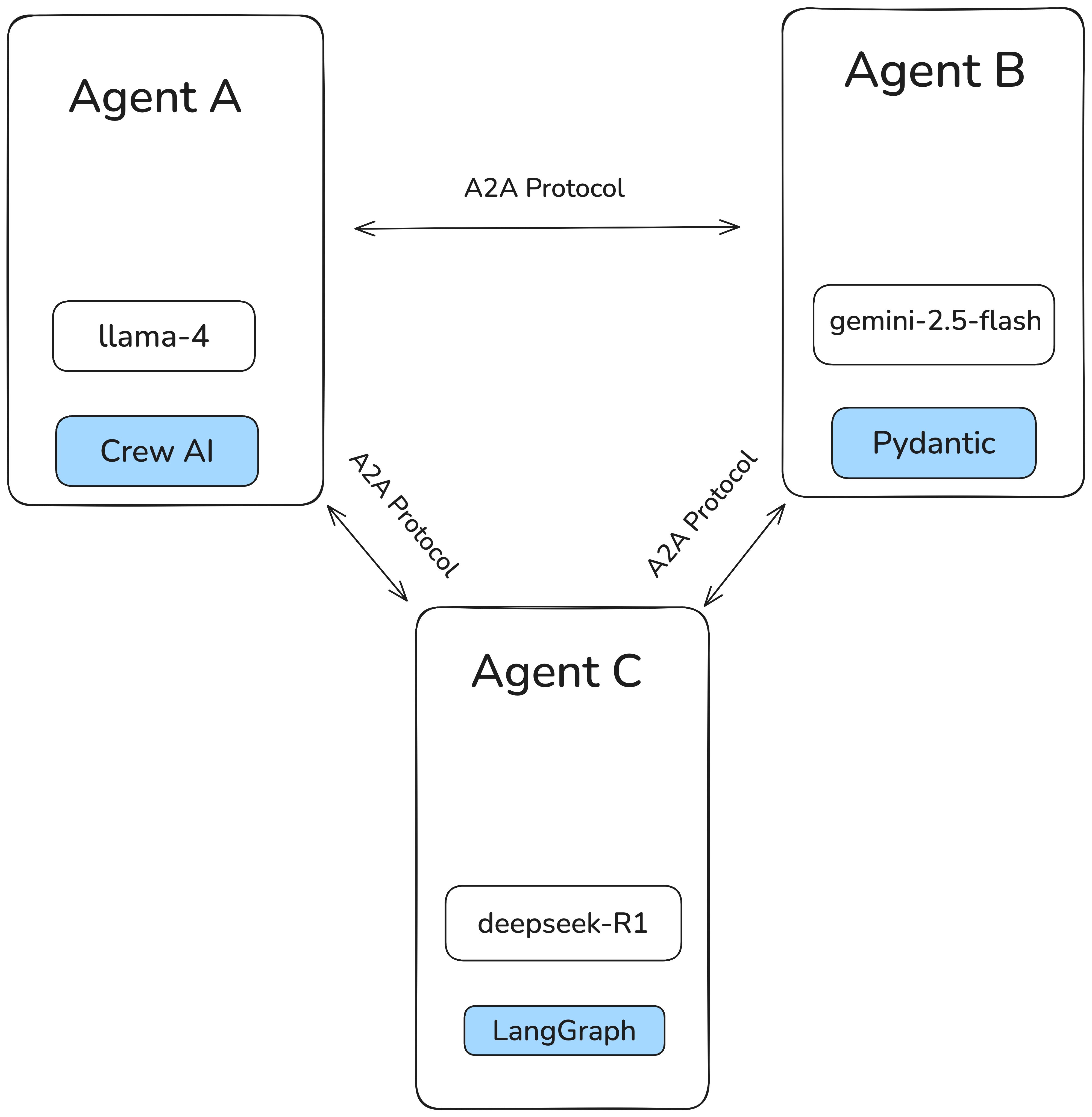

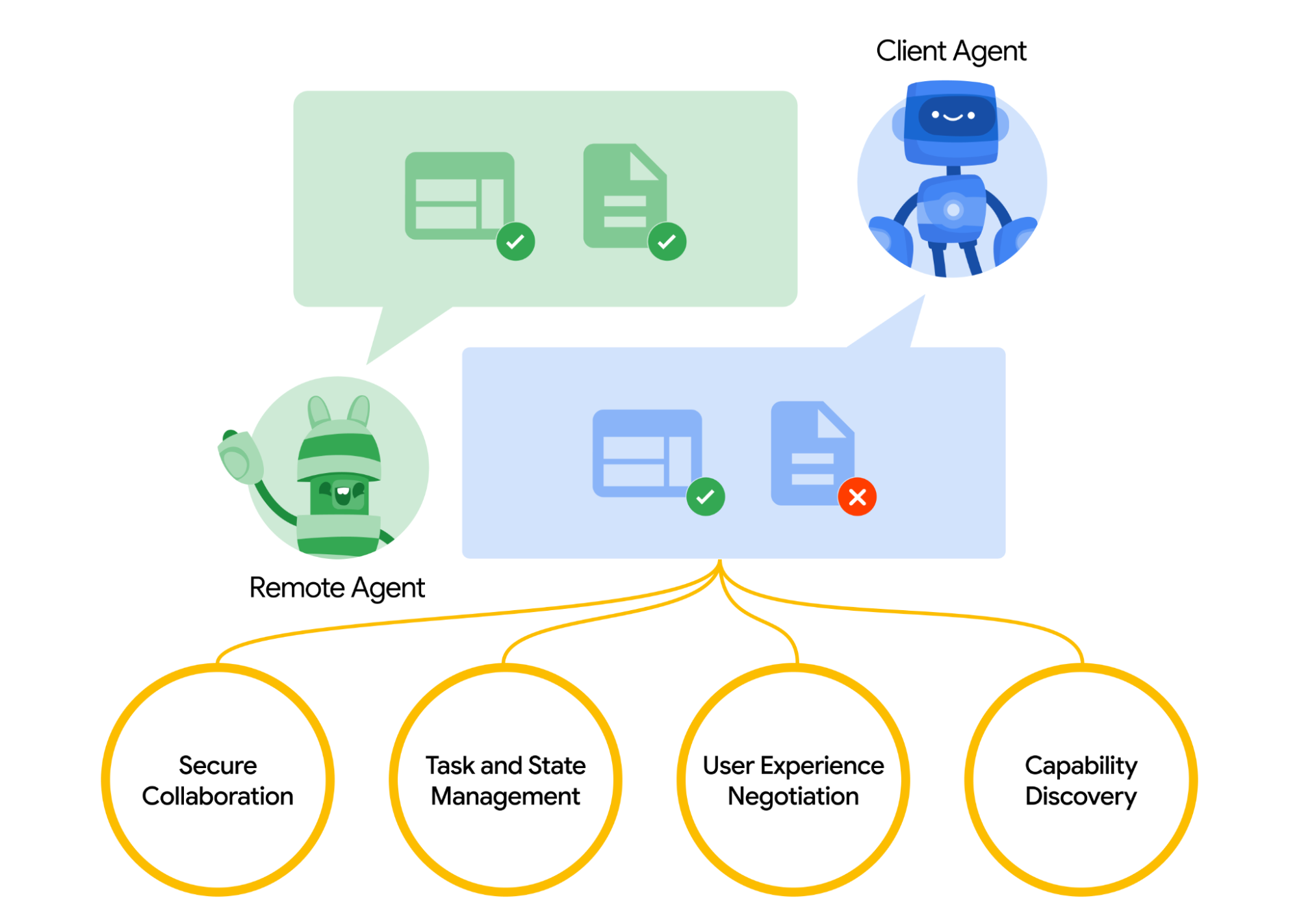

Agent2Agent (A2A) is Google's open protocol for multi-agent communication, announced in April 2025 with backing from over 50 technology partners. It solves a different problem entirely: how do independent AI agents collaborate on tasks?

MCP connects a model to tools. A2A connects an agent to other agents. As Google puts it, A2A complements MCP. MCP provides tools and context to agents, while A2A handles agent-to-agent orchestration.

How A2A works:

- Agents publish an Agent Card, a JSON file describing their capabilities, accepted inputs, and authentication methods

- A client agent discovers remote agents via their Agent Cards

- The client creates a task and sends it to the remote agent over HTTP using JSON-RPC

- Agents communicate through structured messages with parts (text, files, data), enabling UI negotiation (iframes, video, web forms)

- Tasks have a full lifecycle (submitted, working, completed/failed) with Server-Sent Events (SSE) for real-time streaming and push notifications for long-running work
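The first three steps might look something like the sketch below. The agent, its URL, and its skill are hypothetical, and the field names and the `message/send` method are written from memory of the A2A spec, which has renamed things between versions, so treat them as assumptions and check the current spec before relying on them. Nothing is sent over the network.

```python
import json
import uuid
from typing import Optional

# Hypothetical Agent Card for a remote research agent.
AGENT_CARD = {
    "name": "Research Agent",
    "description": "Finds and summarizes public sources on a topic.",
    "url": "https://agents.example.com/research",
    "capabilities": {"streaming": True, "pushNotifications": False},
    "skills": [
        {
            "id": "summarize-sources",
            "name": "Summarize sources",
            "description": "Summarize public sources on a given topic.",
        },
    ],
}


def find_skill(card: dict, skill_id: str) -> Optional[dict]:
    """Discovery: check whether the remote agent advertises a given skill."""
    for skill in card["skills"]:
        if skill["id"] == skill_id:
            return skill
    return None


def build_task_request(card: dict, text: str) -> dict:
    """Build the JSON-RPC body a client agent would POST to the remote agent."""
    return {
        "url": card["url"],
        "body": {
            "jsonrpc": "2.0",
            "id": str(uuid.uuid4()),
            "method": "message/send",  # assumed method name, see note above
            "params": {
                "message": {
                    "role": "user",
                    "parts": [{"kind": "text", "text": text}],
                }
            },
        },
    }


if find_skill(AGENT_CARD, "summarize-sources") is not None:
    outgoing = build_task_request(AGENT_CARD, "Summarize recent work on agent protocols")
    print(json.dumps(outgoing["body"], indent=2))
```

Note that the client learns where to send the task, and what the agent can do, entirely from the card.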

Key A2A design principles:

- Embrace agentic capabilities that go beyond simple tool-use to enable true multi-agent collaboration

- Built on existing standards like HTTP, SSE, and JSON-RPC, so no exotic infrastructure is needed

- Secure by default with enterprise-grade auth matching OpenAPI's authentication schemes

- Modality agnostic, supporting text, audio, and video streaming, not just text

Key A2A concepts:

- Agent Card is like a business card for your agent. Published as JSON, discoverable by other agents

- Task is the unit of work. Has a lifecycle with real-time status updates

- Artifact is the output of a task (generated files, structured data, etc.)

- Parts are content chunks within messages with specified types, enabling format negotiation between agents
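One way to picture how Task, Artifact, and Parts fit together is as a small state machine. This is a sketch using the simplified lifecycle described above (submitted, working, completed/failed); the class and field names are my own, and the actual protocol defines additional states and fields that are left out here.

```python
from dataclasses import dataclass, field

# Allowed transitions for the simplified lifecycle; terminal states have none.
TRANSITIONS = {
    "submitted": {"working", "failed"},
    "working": {"completed", "failed"},
    "completed": set(),
    "failed": set(),
}


@dataclass
class Part:
    kind: str        # "text", "file", or "data"
    content: object  # the payload for that kind


@dataclass
class Artifact:
    name: str
    parts: list[Part]


@dataclass
class Task:
    task_id: str
    state: str = "submitted"
    history: list[str] = field(default_factory=lambda: ["submitted"])
    artifacts: list[Artifact] = field(default_factory=list)

    def advance(self, new_state: str) -> None:
        """Move to a new state, rejecting transitions the lifecycle forbids."""
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(f"cannot go from {self.state} to {new_state}")
        self.state = new_state
        self.history.append(new_state)

    def complete(self, artifact: Artifact) -> None:
        """Finish the task and attach its output."""
        self.advance("completed")
        self.artifacts.append(artifact)


task = Task(task_id="task-1")
task.advance("working")
task.complete(Artifact(name="report", parts=[Part(kind="text", content="Summary goes here.")]))
print(task.history, [a.name for a in task.artifacts])
```

Each `advance` call is the kind of event a remote agent would stream back as a status update.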

The ecosystem: A2A already has backing from Atlassian, Box, Cohere, LangChain, MongoDB, PayPal, Salesforce, SAP, ServiceNow, Workday, and many more. Major consulting firms (Deloitte, McKinsey, Accenture, PwC) are also on board, a strong signal that enterprise adoption is being taken seriously.

When to use A2A:

- Multi-agent systems where specialized agents handle different domains

- Cross-organization agent collaboration (your agent talks to a vendor's agent)

- Complex workflows that require delegation across agent boundaries

- When agents are built on different frameworks or by different teams

- Enterprise scenarios requiring standardized auth and long-running task management

The Real Comparison

Here's where people get confused. These aren't competing protocols. They operate at different layers.

| Aspect | API | MCP | A2A |
|---|---|---|---|
| What talks | Software to Software | AI Model to Tools | AI Agent to AI Agent |
| Purpose | Data exchange | Tool use & context | Agent collaboration |
| Discovery | Documentation / OpenAPI spec | Dynamic tool discovery | Agent Cards |
| Intelligence | None (caller must know everything) | Model decides what to call | Both sides have intelligence |
| State | Stateless (typically) | Session-based | Task lifecycle |
| Standard | REST/GraphQL/gRPC | MCP (Anthropic) | A2A (Google) |
| Maturity | Decades old | Early but growing fast | Very early |

How They Work Together

In a real system, you'll probably use all three.

Picture this architecture:

- Your AI agent receives a user request

- It uses MCP to access local tools (search files, query your database, read documents)

- It calls APIs for simple, well-defined external services (weather, stock prices, sending emails)

- It delegates complex subtasks to specialized agents via A2A (a research agent, a coding agent, a data analysis agent)

MCP is the nervous system, connecting the brain (LLM) to its hands (tools).

APIs are direct phone lines. Quick, reliable, no intelligence needed on the other end.

A2A is the meeting room, where intelligent agents negotiate, delegate, and collaborate.
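A stripped-down sketch of that architecture: one agent, three kinds of outbound call. The handlers are stubs that return strings instead of doing real work, and the step names and the fixed plan are hypothetical; in a real agent the LLM would decide which step to take next.

```python
from typing import Callable


def mcp_search_files(query: str) -> str:
    # Stub: a real version would send an MCP tool call to a local server.
    return f"files matching {query!r}"


def api_get_weather(city: str) -> str:
    # Stub: a real version would make a plain HTTP request.
    return f"weather for {city}"


def a2a_delegate_research(topic: str) -> str:
    # Stub: a real version would create a task on a remote agent.
    return f"research task submitted for {topic!r}"


# Which layer each step goes through.
ROUTES: dict[str, tuple[str, Callable[[str], str]]] = {
    "search_files": ("MCP", mcp_search_files),
    "get_weather": ("API", api_get_weather),
    "research": ("A2A", a2a_delegate_research),
}


def dispatch(step: str, argument: str) -> str:
    """Send one step of the plan through the layer that owns it."""
    layer, handler = ROUTES[step]
    return f"{layer}: {handler(argument)}"


# A fixed plan standing in for decisions the model would make at runtime.
plan = [
    ("search_files", "Q3 report"),
    ("get_weather", "Berlin"),
    ("research", "competitor pricing"),
]
results = [dispatch(step, argument) for step, argument in plan]
for line in results:
    print(line)
```

The routing table is the whole idea: the protocols sit side by side, and each step goes through whichever one fits.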

What Should You Actually Build With?

If you're building a single AI assistant, use MCP + APIs. Use MCP for tool integrations and APIs for external services. You don't need A2A yet.

If you're building a multi-agent system within one org, use MCP + APIs + maybe A2A. You could use direct function calls between agents, but A2A gives you a standard protocol if agents run as separate services.

If you're building agents that need to talk to external agents, A2A becomes essential. Of the three, it's the only one designed for cross-boundary agent communication with proper discovery, auth, and task management.

If you're wrapping existing services for AI consumption, build an MCP server. It's the fastest way to make your service AI-accessible across any MCP-compatible client.
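The four cases above boil down to a few questions, which can be written as a small function. The function and parameter names are my own shorthand for this article's advice, not an established rule set.

```python
def recommend_stack(
    multi_agent: bool = False,
    agents_run_as_separate_services: bool = False,
    talks_to_external_agents: bool = False,
    wrapping_existing_service: bool = False,
) -> list[str]:
    """Map the scenarios described above to a suggested protocol stack."""
    if wrapping_existing_service:
        # Exposing a service to AI clients: build an MCP server.
        return ["MCP server"]
    stack = ["MCP", "APIs"]
    # A2A earns its place once agents cross a service or organization boundary.
    if talks_to_external_agents or (multi_agent and agents_run_as_separate_services):
        stack.append("A2A")
    return stack


print(recommend_stack())
print(recommend_stack(multi_agent=True, agents_run_as_separate_services=True))
```

A multi-agent system whose agents live in one process still comes out as MCP plus APIs, matching the "direct function calls" option above.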

The Bottom Line

Stop thinking about these as competing standards. They're layers:

- APIs have been here for decades and aren't going anywhere

- MCP standardizes how AI uses tools. It's becoming the USB-C of AI integrations

- A2A standardizes how agents collaborate. It's early but solves a real problem as multi-agent systems grow

The winning architecture uses all three where they make sense. Don't over-engineer. Start with APIs and MCP, and add A2A when you actually have multiple agents that need to talk.

Build for what you need today. The protocols will mature, but the layered thinking won't change.
